Building a Federated Lung Cancer Research Platform for IASLC

By Domenic DiNatale

There are two ways to coordinate a global medical research study. You can centralize the data — pull every institution's imaging into one repository, run your algorithms there, and ship results back. Or you can centralize the algorithms — keep every institution's data where it lives, ship the algorithms to the data, and aggregate only the results. The first approach is easier to build. The second is the only one that actually works at international scale.

The platform we built for the International Association for the Study of Lung Cancer (IASLC) — known internally as ELIC, the Early Lung Imaging Confederation — is a federated lung cancer research platform built on the second pattern. It coordinates standardized algorithm validation across 10+ international institutions, lets each institution keep its imaging data on its own infrastructure, and reduces the total compute footprint of the studies by roughly 80% relative to a centralized approach. This is how we built it, and why the architecture that supports those properties is more interesting than the one most people picture.

The Problem With Centralization

Centralized medical-imaging research has a credibility problem that has only gotten worse as data-sovereignty regulations have multiplied. To run a study across institutions in the U.S., U.K., E.U., Brazil, and several Asian countries simultaneously — which is what IASLC needed to do — a centralized model has to negotiate data-export agreements with every contributing institution, satisfy every relevant regulatory regime, store enormous volumes of imaging in a single jurisdiction, and persuade institutional review boards that the centralization is necessary in the first place. The boards are not always persuaded.

Even if all of that gets resolved, the centralized model has practical problems. CT studies are large. Moving them across continents is slow, expensive, and creates persistent copies of patient data outside their originating institution's control. The ratio of bytes moved to insight produced is terrible. And the centralization creates a single point of failure for the entire research program — if the hub goes down or its data store gets compromised, the impact is global.

The federated alternative inverts the problem. Algorithms move. Data stays. Each contributing institution runs its own spoke, the algorithms run inside a controlled environment at the spoke, and only the algorithm output — typically a few kilobytes of structured findings — leaves the institution and goes back to the hub. The privacy story is dramatically simpler because the privacy boundary doesn't move. The cost story is better because you stop shipping gigabytes for every case. And the regulatory story is tractable because each spoke operates under its own institution's existing data-handling policy.

That is the model we built ELIC on.

Hub and Spoke, Concretely

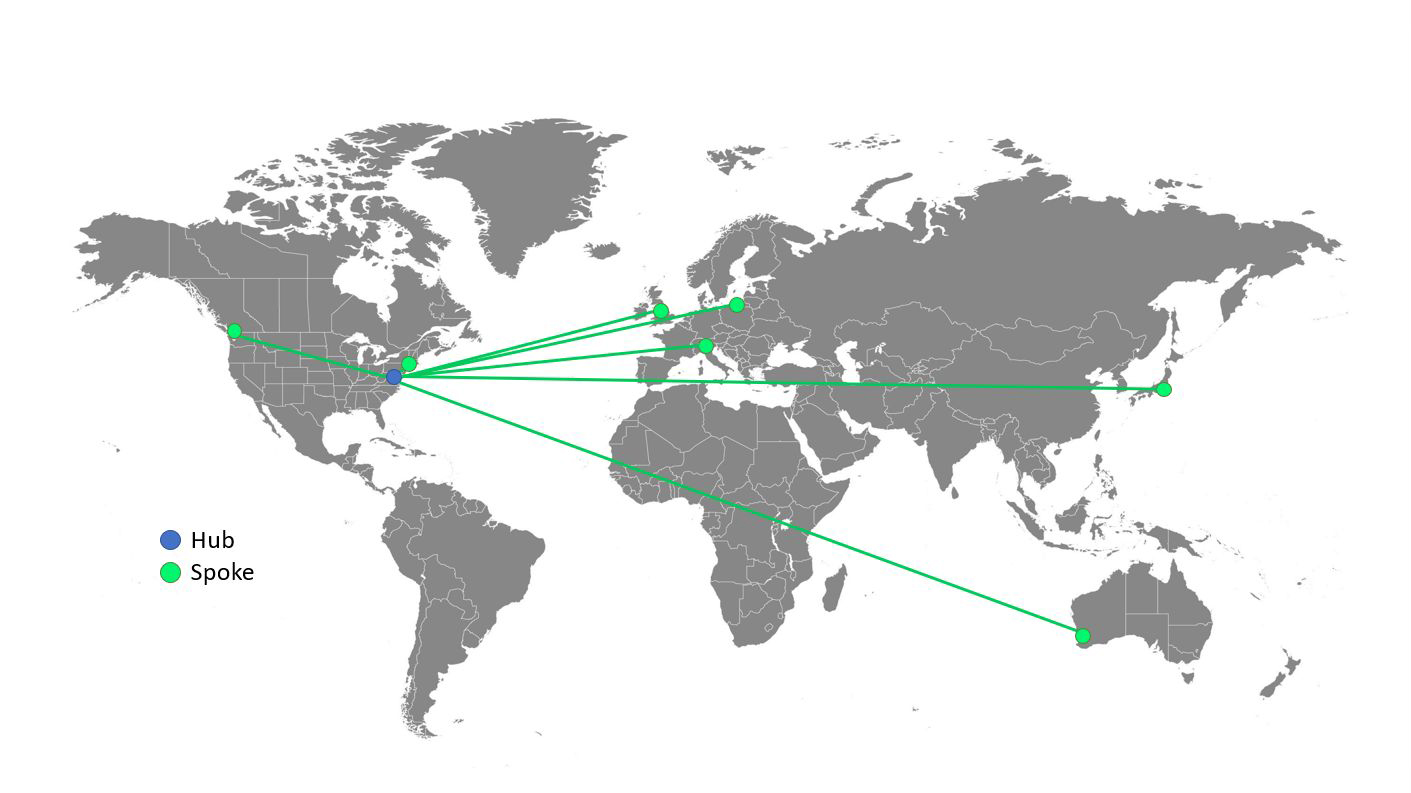

The platform consists of two coordinated processes. The Hub runs centrally, owns the master experiment definition, the algorithm registry, and the aggregated results store. The Spokes run at each contributing institution, own the institution's local imaging data, and execute algorithms against that data when the Hub instructs them to.

Both Hub and Spoke are Java services — built on Grails, deployed as Docker containers, running on AWS EC2 instances on the Hub side and on whatever infrastructure the institution provides on the Spoke side. The two communicate over Apache ActiveMQ as a message broker, with TLS-secured connections and explicit allowlisting per Spoke.

The choice of ActiveMQ over a synchronous HTTP API was deliberate. The actual workflow looks like this: the Hub publishes an experiment, the Spokes pick up the experiment description, the Spokes run the algorithm against their local cases (which can take hours per case, days per cohort), the Spokes report results back. This is not a request/response pattern. It is a long-running, asynchronous, durably-queued workflow where any link can be offline for a meaningful interval without breaking the whole pipeline. ActiveMQ models that natively. We get durable message persistence on the broker, automatic redelivery on connection loss, and clean delivery semantics across slow international links.

When a Spoke loses connectivity for a day, the messages queued for it accumulate at the broker; when it comes back, it processes them. When the Hub is down for a maintenance window, the Spokes' result messages queue up and deliver when the Hub returns. The system tolerates the operational realities of running across continents, time zones, and varying institutional IT environments without making any single component the limiting factor.

The Algorithm Contract

The thing that makes the federated model usable is a sharply-defined contract between the platform and the algorithms it runs. We open-sourced an algorithm developer example so research teams could see exactly what the contract is. It is small enough to describe completely:

An algorithm is a Docker container. The container's entrypoint reads an ALGO_ARG environment variable that carries algorithm-specific arguments and a path to a participant JSON file (the Spoke produces this file by extracting de-identified metadata about the case to be processed). The participant JSON points the algorithm at DICOM directories mounted into the container at predictable paths. The algorithm runs to completion — minutes, hours, or days — and writes a single output.json file describing what it found. The Spoke picks up that file and ships only its contents back to the Hub.

That contract is portable across operating systems, hardware, and languages. An algorithm written in Python with PyTorch and one written in C++ with custom inference both expose the same shape to the platform. A research team that wants to validate a new lung-nodule detection method against the IASLC cohort writes a Docker container, ships it to the registry, and the rest of the platform handles the orchestration. We deliberately resisted every temptation to build a richer interface. The minimal contract is the thing that makes the system work for research teams across very different institutional environments.

Where the 80% Compute Reduction Comes From

The headline efficiency number is not a marketing line. It comes from three structural properties of the federated model.

No data movement. The bytes that would have been shipped from every spoke to a central store in a centralized model are simply never moved. For a study running across imaging volumes that total tens of terabytes, the egress savings alone are substantial.

Spoke compute is amortized. Each contributing institution already has compute infrastructure adequate to process its own cases. The Spoke runs on that infrastructure. The platform consumes spare capacity at each institution rather than provisioning equivalent capacity centrally. Across 10+ institutions, the cumulative compute that would have run on a central GPU cluster instead runs distributed across infrastructure that already exists.

Hub compute is small. The Hub does not process imaging. It coordinates. Its workload is dominated by message routing, results aggregation, and experiment management — operations that are orders of magnitude lighter than the actual algorithm execution. That asymmetry is what lets the Hub run on a small EC2 footprint while the Spokes do the heavy work.

The combined effect — no data egress, distributed compute, light hub — is the 80% reduction in total compute footprint compared to a centralized architecture sized for the same studies.

Operational Lessons

The thing nobody warns you about when you build a federated platform is that you are now operating across N institutional IT environments, every one of which has its own quirks, its own change windows, its own security posture, and its own working hours. The platform has to be defensive in ways that purely-centralized platforms can ignore.

Every Spoke connection is mutually authenticated and operates within an explicit allowlist on the broker. Every algorithm container is signed and verified against the registry before execution. Every result message has a cryptographic chain of custody back to the Spoke that produced it. Every interaction is logged, on both sides, in formats designed for forensic reconstruction across jurisdictions whose legal regimes are not aligned. Operating a federated research platform forces a posture toward security and observability that centralized systems can sometimes get away with skipping. We were not allowed to skip it. The platform's correctness depends on being able to demonstrate, after the fact, what happened at every step.

This posture is what made the platform usable in regulatory environments where centralized alternatives could not be approved. It is also why the same architecture serves as a reference for any research program that needs to coordinate work across institutions with non-aligned policies.

What This Bought IASLC

ELIC is in active use across more than ten international institutions, supporting standardized algorithm validation for global lung cancer research. The studies that run on it are the kinds of multi-site validation programs that, in a centralized world, would require years of cross-jurisdictional data-handling agreements before a single algorithm could be evaluated. With ELIC, an algorithm goes into the registry and can be evaluated against the cohort within days. That speed-up — and the credibility of doing it without moving patient data across borders — is the actual product.

If you are coordinating research across institutions where the data cannot or should not move, the federated lung cancer research platform pattern is the architectural shape that makes the work tractable. Hub and spoke. ActiveMQ for messaging. Docker containers as the algorithm contract. Aggregate only the results. Trust the boundary, verify the work. We built ELIC on those principles. Five years in, the principles are still right.

Related work from our team:

The project narrative lives on the IASLC-ELIC case study page. For context on how we think about identity and access boundaries in distributed systems, see Who Is Actually Logged In? Identity When the Actor Is an AI Agent, Zero Trust Doesn't Change When the Actor Is a Machine, and Compliance Will Not Save You.